When creating software, there is one topic that everybody talks about sooner or later: performance. Even though Windows hides a lot of performance optimization work from the developer (when developing for .NET with WPF), there are still a number of issues to keep in mind when implementing a piece of software.

To start things off slowly: how does computer science define performance? Very generally speaking, it is formally described as “the ability of software to complete certain tasks” (see Wikipedia). Most commonly, however, the term simply refers to the speed of software. In that case, people usually do not differentiate between the performance of the user interface and the performance of the application logic itself.

Nonetheless, a development team should have a clear understanding of who is responsible for which performance aspects, rather than pushing all responsibility onto a single developer. Even though performance affects the entire application, much can be gained by distributing optimization tasks among different people according to their expertise and specialization. For this reason I, as a Design Engineer, put significant effort into performance analyses for our customers. While our customers focus on optimizing C# code, such as the user interface logic or the layers below it, my area of expertise is the optimization of XAML code.

Identifying performance problems

In order to optimize user interface performance, the areas that cause trouble first need to be detected and localized. Without appropriate data, a performance analysis becomes problematic. To be more explicit: since development and target systems rarely match, “playing around” really gets you nowhere.

Fortunately, there are a couple of useful tools for gathering valid data on .NET-based software systems. Probably the best known is the WPF Performance Suite, which is included in the Windows SDK.

To create a performance profile of an application, the user has the following tools at hand:

Perforator

Perforator is especially helpful for analyzing the rendering behavior of an application. Using its different types of diagrams, a developer can analyze rendering processes thoroughly and quickly identify performance issues such as spikes in CPU load. The numerous settings also make it possible to simulate different configurations of an application, such as runtime behavior with or without opacity effects. Thankfully, WPF relies on a rendering technique called “dirty regions”, which means that only areas that have changed are redrawn. The option “Show dirty-region update overlay” colors those areas that change during runtime. For UI design engineers, this information gives a first indication of which elements of the application might have a negative impact on overall performance. At that stage, small changes such as exchanging a layout container may already lead to small boosts in performance.

Shown below is a screenshot of Perforator, which illustrates the difference between an application with many rendering operations and one with few.

The screenshot also depicts an extreme configuration, as the option “Disable dirty region support” is checked (circled in red). As a consequence, the entire screen is redrawn every time an action is performed. Whether this scenario actually occurs in the application at hand can be determined with the overlay option described earlier (circled in blue).

The need to redraw the entire screen arises, for example, when elements are animated using Expression Blend’s FluidLayout behavior. While it can animate properties that do not per se allow smooth transitions (such as “Visibility”), it literally eats away performance. In this demo application, expanding the navigation bar caused up to 79 rects/s to be redrawn. If the same animation is instead implemented with FluidLayout, which in turn installs a custom visual state manager, the entire window is redrawn, and the same animation requires 231 rects/s. Keeping the application’s minimal requirements in mind, one can imagine the drastic performance loss when this is used in a complex business application. Therefore, especially when it comes to animation, it is important to find a good balance between “a sexy UI” and reasonable performance. Additionally, animations should only be used where they actually help users understand the user interface.

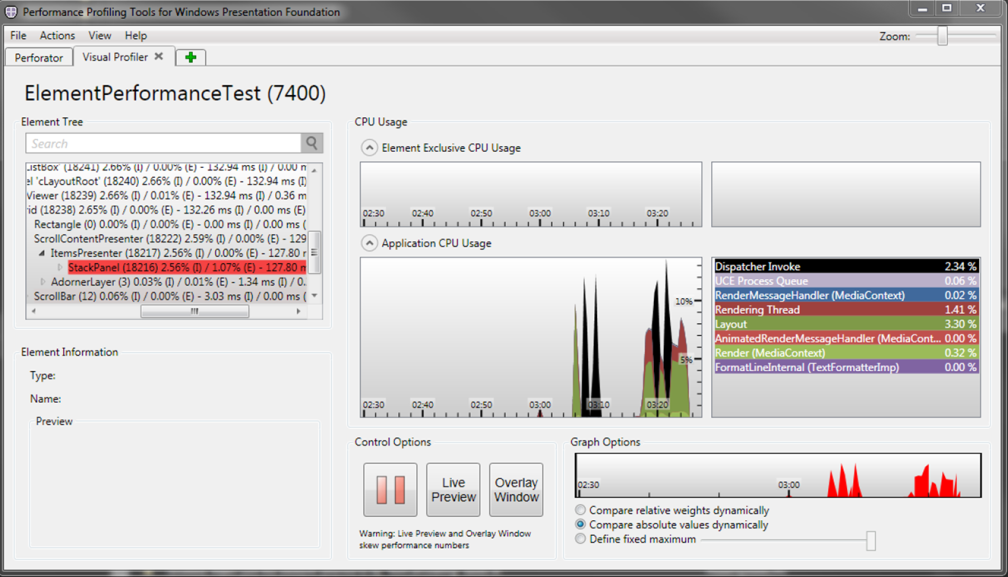

Visual Profiler

The second tool in the WPF Performance Suite provides the means to identify individual UI elements that cause significant performance bottlenecks. The Visual Profiler provides a tree view for easily exploring the application’s visual tree. Elements that have a negative impact on performance are colored in shades of red according to their resource consumption. Darker tones of red naturally represent higher consumption.

The screenshot shows data from the analysis of a StackPanel, which is used in the ItemsPanelTemplate of a ListBox. Noteworthy is the area on the right, which details CPU workload and visually presents it as a diagram.

Detailed information about the WPF Performance Suite is available at Microsoft.

dotTrace

Another useful tool for tracking down performance problems is JetBrains’ dotTrace, which also provides features for surveying memory consumption. This is relevant because high memory consumption usually hints at the instantiation of many heavyweight objects, and since every instantiation takes time, this again results in a loss of performance. Whereas the WPF Performance Suite is top-notch for analyzing the performance of the view layer, the dotTrace profiler targets the optimization of C# code. During its analysis, it unfolds the entire call stack of the application along with the number and duration of each call. Repeated calls of the same constructor might, for example, indicate errors in the virtualization of a certain list. To use dotTrace successfully, however, one should have basic knowledge of the application’s C# classes.

More information is available at JetBrains.

What rules of thumb should a Design Engineer adhere to in order to enhance performance?

As mentioned above, quite a few potential performance problems may arise in an application. Most of them, however, are no insurmountable hurdle, as I will explain in the following sections. Moreover, Microsoft has been busy enhancing general performance since the introduction of .NET 4.0, which is especially reflected in the handling of pixel shaders and the rendering of complexly styled elements.

Effects

First off, with .NET 4.0 it is possible to develop effects based on Pixel Shader 3.0, a drastically improved shader model compared to the versions supported by older releases. In practical terms, this means that even more effects support hardware acceleration, and these perform better than effects rendered solely in software. To put it simply: while the graphics card handles rendering, the processor can follow its actual purpose of dealing with computations. In spite of that, a UI design engineer should always consider whether there is an easier way of realizing a visual effect. Using a resource killer such as the WPF drop shadow effect to draw a subtle 1-pixel shadow below a label can be avoided by placing a second, differently colored label with a small offset behind it. Simplifications such as these have proven very useful in practice and should therefore be considered when styling an application.
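The label trick described above could be sketched in XAML roughly as follows. This is only an illustration: the text, the colors and the one-pixel offset are assumptions, not values from a real project.

```xml
<!-- Hypothetical label with a cheap 1-pixel "shadow": two TextBlocks share one Grid cell -->
<Grid xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation">
    <!-- Shadow copy: same text, darker color, shifted one pixel down -->
    <TextBlock Text="Customer name" Foreground="#66000000">
        <TextBlock.RenderTransform>
            <TranslateTransform Y="1" />
        </TextBlock.RenderTransform>
    </TextBlock>
    <!-- Actual label, drawn on top of the shadow copy -->
    <TextBlock Text="Customer name" Foreground="White" />
</Grid>
```

Compared to a DropShadowEffect, this costs one additional text element but no effect pass at all.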

Caching

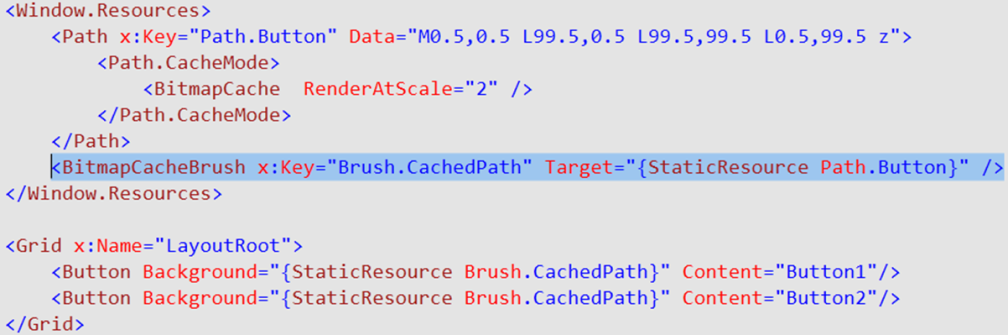

Another way of enhancing performance lies in the magic of the BitmapCache class. It provides a caching option for visually complex UI elements, so that they are stored and rendered as plain bitmaps. Bitmap caching can be turned on for any UI element by setting its CacheMode property in XAML.
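As a minimal sketch, enabling the cache on an element might look like this. The Border and its content are placeholders chosen for illustration. Only the CacheMode property and the BitmapCache class come from WPF itself.

```xml
<!-- Placeholder element; imagine visually complex content inside -->
<Border xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
        Width="200" Height="120" CornerRadius="8" Background="SteelBlue">
    <!-- Store and render this element (and its subtree) as a bitmap -->
    <Border.CacheMode>
        <BitmapCache />
    </Border.CacheMode>
    <TextBlock Text="Complex content goes here" />
</Border>
```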

Especially for animations, this leads to a significant reduction of rendering cost. Still, attention should be paid to the fact that cached elements remain interactive and consequently receive all WPF events, such as mouse clicks or keyboard input. In those cases, the cache is flushed and the element is redrawn.

Scaling objects without flushing the cache is possible, however. With the RenderAtScale property, the bitmap is rendered at a given scaling factor. By default, the element is cached at its original size (at 96 dpi). It is recommended to keep a close eye on the results to identify potential visual side effects. For example, one should refrain from using bitmap caching on elements that contain text, as it will likely affect readability. Moreover, the number of cached UI elements should not grow too large, to avoid problems with working memory. An essential aspect of bitmap caching is the element’s layout: changing the layout of a control flushes the bitmap cache, which is a pretty expensive operation. As a consequence, while bitmap caching is active, an element’s layout should change as little as possible at runtime or, even better, not at all. To summarize: with bitmap caching enabled, RenderTransform can be used without concern, as opposed to LayoutTransform.
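The following sketch combines both points: the element is cached at twice its size via RenderAtScale and is then zoomed with a RenderTransform, which leaves the layout untouched. The concrete sizes, the scale factor and the Loaded trigger are assumptions made for the example.

```xml
<Border xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
        Width="200" Height="120" Background="SteelBlue"
        RenderTransformOrigin="0.5,0.5">
    <!-- Cache at 2x so the bitmap stays crisp when zoomed to double size -->
    <Border.CacheMode>
        <BitmapCache RenderAtScale="2" />
    </Border.CacheMode>
    <!-- RenderTransform does not trigger a layout pass, unlike LayoutTransform -->
    <Border.RenderTransform>
        <ScaleTransform ScaleX="1" ScaleY="1" />
    </Border.RenderTransform>
    <Border.Triggers>
        <!-- Illustrative zoom animation that starts once the element is loaded -->
        <EventTrigger RoutedEvent="FrameworkElement.Loaded">
            <BeginStoryboard>
                <Storyboard>
                    <DoubleAnimation Storyboard.TargetProperty="(UIElement.RenderTransform).(ScaleTransform.ScaleX)"
                                     To="2" Duration="0:0:1" />
                    <DoubleAnimation Storyboard.TargetProperty="(UIElement.RenderTransform).(ScaleTransform.ScaleY)"
                                     To="2" Duration="0:0:1" />
                </Storyboard>
            </BeginStoryboard>
        </EventTrigger>
    </Border.Triggers>
</Border>
```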

Microsoft offers another way to temporarily buffer elements. The BitmapCacheBrush class turns a cached element into a brush, which can then be applied to several elements, for instance as their background.

Reusing a buffered element and consequently minimizing drawing efforts will also result in a better performance.
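A possible use of BitmapCacheBrush is sketched below. The resource key, the cached Ellipse and the three Borders are hypothetical and only show the idea of drawing one cached visual in several places.

```xml
<Grid xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
      xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml">
    <Grid.Resources>
        <!-- Hypothetical brush: the Target visual is rendered once into a bitmap -->
        <BitmapCacheBrush x:Key="CachedBadgeBrush">
            <BitmapCacheBrush.Target>
                <Ellipse Width="40" Height="40" Fill="SteelBlue" />
            </BitmapCacheBrush.Target>
            <BitmapCacheBrush.BitmapCache>
                <BitmapCache />
            </BitmapCacheBrush.BitmapCache>
        </BitmapCacheBrush>
    </Grid.Resources>
    <!-- The same cached bitmap serves as background for several elements -->
    <StackPanel Orientation="Horizontal">
        <Border Width="40" Height="40" Background="{StaticResource CachedBadgeBrush}" />
        <Border Width="40" Height="40" Background="{StaticResource CachedBadgeBrush}" />
        <Border Width="40" Height="40" Background="{StaticResource CachedBadgeBrush}" />
    </StackPanel>
</Grid>
```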

Consistent use of the described classes can reduce CPU load by up to a factor of three in some cases.

Lists

Usually performance bottlenecks occur when the application needs to visualize a lot of data. UI controls that are frequently used to display data on a User Interface in WPF are ListBox, ItemsControl and DataGrid.

A list’s scrolling performance is a very important issue here. The VirtualizingPanel class offers a way to suppress the instantiation of list elements until they actually become visible. This results in a major improvement of runtime behavior, especially regarding the loading time of a list. The bottom line: when overriding the ItemsPanel of a list, it is recommended to keep the VirtualizingStackPanel (which is the default items host) as long as possible.

In addition, the VirtualizationMode property of VirtualizingStackPanel can noticeably improve scrolling performance. By default, WPF discards list element containers as soon as they scroll out of view and recreates them when they scroll back into view. This default behavior is not always the best solution. In practice, we often found that the same kind of element container is repeated throughout a list. In that case, it is worth considering disabling the constant discarding and recreating of list elements: setting VirtualizationMode to “Recycling” causes element containers to be reused.
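On a ListBox, container recycling can be switched on through the attached properties of VirtualizingStackPanel. In this sketch the binding path "Customers" is a hypothetical property of the view model.

```xml
<!-- "Customers" is an assumed collection on the DataContext -->
<ListBox xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
         ItemsSource="{Binding Customers}"
         VirtualizingStackPanel.IsVirtualizing="True"
         VirtualizingStackPanel.VirtualizationMode="Recycling" />
```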

Recycling containers in this way may improve scrolling performance by up to 40%.

Difficulties will most likely arise if a list needs to scroll per pixel rather than per element. An easy solution is to set the CanContentScroll property to false. Even though this makes scrolling feel very smooth, there is a big unwanted side effect: virtualization is deactivated, even if a VirtualizingPanel is used as the ItemsPanel of the list. Accordingly, caution is advised when styling a list to support smooth scrolling.
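For reference, per-pixel scrolling is enabled through the attached ScrollViewer.CanContentScroll property. The binding path in this sketch is again hypothetical, and the comment repeats the caveat from above.

```xml
<!-- Smooth per-pixel scrolling; note that this turns UI virtualization off -->
<ListBox xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
         ItemsSource="{Binding Customers}"
         ScrollViewer.CanContentScroll="False" />
```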

Resource Dictionaries

A very important issue in big software projects is resource management through ResourceDictionaries. A peculiarity of WPF, whether we like it or not, is that a ResourceDictionary is re-instantiated every time it is included in a view via MergedDictionaries. Keeping in mind that frameworks such as Prism compose views dynamically by instantiating other views, it becomes clear that the organization of ResourceDictionaries has to be well planned. In a real project, many views typically merge the same dictionaries, and each view instance gets its own copies.
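As a minimal sketch with hypothetical names (the Demo assembly, the CustomerView class and both dictionary files are made up for illustration), a view that merges shared dictionaries could look like this. Every instance of such a view loads its own copies of the two dictionaries.

```xml
<UserControl x:Class="Demo.CustomerView"
             xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
             xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml">
    <UserControl.Resources>
        <ResourceDictionary>
            <ResourceDictionary.MergedDictionaries>
                <!-- Hypothetical dictionaries that other views merge as well -->
                <ResourceDictionary Source="/Demo;component/Themes/Brushes.xaml" />
                <ResourceDictionary Source="/Demo;component/Themes/ButtonStyles.xaml" />
            </ResourceDictionary.MergedDictionaries>
        </ResourceDictionary>
    </UserControl.Resources>
    <Grid />
</UserControl>
```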

Frequent duplication of ResourceDictionaries in an application will therefore most likely result in enormous memory consumption. As WPF is infamous for this “problem”, Google spits out quite a few suggestions that claim to offer an easy solution. Not all of them, however, are practicable.

At first sight, a very promising solution seems to be the use of a SharedResourceDictionary, which instantiates every ResourceDictionary only once and then references these shared instances. This comes with a huge drawback, however: every ResourceDictionary is tagged with a reference to its owning view, so the memory used by this view cannot be freed by the garbage collector. This in turn can result in drastic memory leaks, which cannot be resolved easily.

In general, resources that are used more than once should be included globally via App.xaml.
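A global include could be sketched as follows. The application class, the startup window and the dictionary paths are assumptions. The point is only that the dictionaries are merged once at application level instead of once per view.

```xml
<Application x:Class="Demo.App"
             xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
             xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml"
             StartupUri="MainWindow.xaml">
    <Application.Resources>
        <ResourceDictionary>
            <ResourceDictionary.MergedDictionaries>
                <!-- Hypothetical shared dictionaries, loaded once for the whole application -->
                <ResourceDictionary Source="/Demo;component/Themes/Brushes.xaml" />
                <ResourceDictionary Source="/Demo;component/Themes/ButtonStyles.xaml" />
            </ResourceDictionary.MergedDictionaries>
        </ResourceDictionary>
    </Application.Resources>
</Application>
```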

Furthermore, the method of referencing resources in XAML Code has an impact on runtime behavior. WPF offers two different approaches:

1) DynamicResource references, which Expression Blend generates automatically, allow resources to be exchanged at runtime. In return, the resources are reloaded every time the view is loaded, so the greater flexibility comes with potentially longer loading times for views.

2) StaticResource references are completely loaded on startup of the application, so no additional loading time arises when a view is opened. Of course, this comes with a drawback as well: the application takes longer to start up.

Ideally the decision for either resource type should be made ahead of development, depending on preferences regarding startup and runtime loading times.
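In markup, the two approaches differ only in the markup extension that is used. The brush key "AccentBrush" in this sketch is a made-up example resource.

```xml
<StackPanel xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
            xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml">
    <StackPanel.Resources>
        <!-- Hypothetical resource referenced by both buttons -->
        <SolidColorBrush x:Key="AccentBrush" Color="SteelBlue" />
    </StackPanel.Resources>
    <!-- Resolved once; later replacement of the resource is not picked up -->
    <Button Content="Static" Background="{StaticResource AccentBrush}" />
    <!-- Looked up at runtime; follows the resource if it is exchanged -->
    <Button Content="Dynamic" Background="{DynamicResource AccentBrush}" />
</StackPanel>
```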

Trigger vs. Visual States

When I was new to developing with WPF, I often wondered why the WPF default templates always visualize states such as “Pressed” with triggers rather than VisualStates, which are available through their respective VisualStateGroups. In terms of performance, however, the reason is quite obvious: every visual state change starts a storyboard, which in turn contains further timeline objects. Naturally, this consumes more time and memory than simply setting a property in a trigger. As a consequence, VisualStates should be handled with care if performance is an issue.
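To make the difference concrete, here is a sketch of the same “Pressed” visualization implemented both ways. The template keys, element names and colors are invented for this example and are not taken from the WPF default templates.

```xml
<ResourceDictionary xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
                    xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml">

    <!-- Variant 1: a trigger simply sets a property while IsPressed is true -->
    <ControlTemplate x:Key="TriggerButtonTemplate" TargetType="Button">
        <Border x:Name="Root" Background="LightGray">
            <ContentPresenter HorizontalAlignment="Center" VerticalAlignment="Center" />
        </Border>
        <ControlTemplate.Triggers>
            <Trigger Property="IsPressed" Value="True">
                <Setter TargetName="Root" Property="Background" Value="Gray" />
            </Trigger>
        </ControlTemplate.Triggers>
    </ControlTemplate>

    <!-- Variant 2: a visual state runs a storyboard to reach the same result -->
    <ControlTemplate x:Key="VisualStateButtonTemplate" TargetType="Button">
        <Border x:Name="Root">
            <Border.Background>
                <SolidColorBrush x:Name="RootBrush" Color="LightGray" />
            </Border.Background>
            <VisualStateManager.VisualStateGroups>
                <VisualStateGroup x:Name="CommonStates">
                    <VisualState x:Name="Normal" />
                    <VisualState x:Name="Pressed">
                        <Storyboard>
                            <ColorAnimation Storyboard.TargetName="RootBrush"
                                            Storyboard.TargetProperty="Color"
                                            To="Gray" Duration="0" />
                        </Storyboard>
                    </VisualState>
                </VisualStateGroup>
            </VisualStateManager.VisualStateGroups>
            <ContentPresenter HorizontalAlignment="Center" VerticalAlignment="Center" />
        </Border>
    </ControlTemplate>
</ResourceDictionary>
```

The second variant needs a named brush, a state group and a storyboard with an animation object for what the trigger expresses in a single setter.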

Finally, I want to share an easy-to-follow piece of advice for optimizing a user interface. When defining a layout, a large number of elements in the visual tree may lead to a significant loss in performance. To avoid this, one should aim for flat container hierarchies. The type of container should also not be picked at random or out of personal preference. As far as possible, heavyweight ContentControls should be avoided. This simple rule is especially valuable when creating ControlTemplates, since these templates are often reused: assigning a template that contains ten elements to ten buttons adds up to one hundred elements in total.

Summary

To sum things up, performance optimization of a WPF-based .NET application is not something that a single developer alone should be responsible for. Improving runtime behavior is a task to be shared across different specializations and competences in order to achieve an ideal final result. And that is the reason why performance analyses and optimizations are part of a UI design engineer’s everyday work. At least at Centigrade.

Microsoft, Windows, Expression Blend and Expression are trademarks or registered trademarks of Microsoft Corporation in the US and/or other countries.