Welcome to my first blog post ever. How did we get here?

I’ve recently joined Centigrade and (at the risk of this sounding like an ad) I can truly say that it has been a great experience. Employees are not just treated like a “human resource” but like real people. I’ve been given autonomy, responsibility and above all trust. I have to say that really feels great! Instead of overloading me as a new employee with a million projects, I’ve been onboarded to just one and I’ve had the opportunity to research a topic of my choice and write this blog post about it. UX metrics have always been of interest to me, particularly how to integrate them at low cost and effort in order to get more stakeholder buy-in, so it was an easy choice for me. A quick note before I get started for the spelling sharks among us: I always capitalize User for obvious reasons, so don’t get hung up on that. Here we go!

Why UX metrics?

First, let’s start with a definition. I like this one:

“UX metrics are a set of quantitative data that are used to measure, compare and improve the User experience over time.” [1]

For me, what stands out here is the “over time” part. It means that, in order to employ UX metrics, you need to convince your stakeholders to invest in continuous manual and automated research to obtain valuable data. That is probably the first important step to take. Let’s look further at why I think UX metrics are an invaluable tool in the UX expert’s toolkit.

1. Qualitative vs Quantitative

Qualitative research is great for better understanding our Users, generating ideas, navigating the problem space and coming up with solutions. In short, qualitative data answers the “Why?” question. But how do we measure, on some kind of scale, how bad a usability problem we have discovered really is? Have we managed to improve our digital product over time, and how do we select the best of several solutions? That’s where quantitative data comes in: it answers the “How many?”, “How often?” and “How much?” questions.

2. Buy-in

So, you’ve studied User research, or you work with dedicated User researchers who have years of experience, are aware of biases and how to avoid them (see my colleague’s article) and have run tight scientific studies. Together you have created well-backed findings and solutions, summarized them in an appealing way and honed your storytelling skills so that you present your insights interactively and convincingly to your business stakeholders. Yet you’re still not getting their buy-in? I promise, you’re not alone. Getting buy-in from stakeholders based solely on qualitative results can be tricky. But once we can quantify the problems that Users are facing and answer the “How many?” question, we speak the stakeholders’ language. Metrics are the translation of User research into tangible, comparable numbers and visually appealing graphs. And who could withstand the persuasiveness of a well-done graph? As User experience professionals, we always consider our audience. So why not in the case of our stakeholders?

3. Pearson’s Law

Karl Pearson was an academic in statistics who was world-renowned for his insights. Pearson’s law states:

“When performance is measured, performance improves. When performance is measured and reported back, the rate of improvement accelerates.”

In other words, measuring User experience will not just get you stakeholder buy-in but will actually improve the User experience. And if you include business stakeholders in your metrics and report back to them about the metrics’ trends over time, User experience will improve even faster. Since improving the User experience is the ultimate goal of every User experience professional, I can find no better reason to start using UX metrics as soon as possible.

So, let’s explore which metrics to start with and how best to employ them in projects.

What UX metrics are there?

There are plenty of different metrics, and I will not go into detail on all of them in this blog post. What I will do instead is identify the metrics that are, in my opinion, the simplest to measure and get started with: metrics that don’t employ very complicated formulas or require a lot of coding or specialized equipment. If you already conduct User research, especially continuously, the metrics I’ve identified shouldn’t be too difficult to integrate into your workflows.

Metrics in general can be grouped by their different measuring goals. Different frameworks use different naming conventions and sometimes group the metrics slightly differently, but I like this summary of UX measuring goals: Performance, Preference, Perception [2].

- Performance metrics are employed to measure how well the User can achieve their goals.

- Preference metrics measure what the User prefers or likes.

- Perception metrics measure what the User thinks.

In order to get a better and more accurate understanding of the User experience of your product, it is always a good idea to choose a mix of metrics from these three categories.

As always in User research, we must keep biases in mind and differentiate between metrics that measure what the User says (attitudinal) and what the User actually does (behavioral). It is always best to use a mix of attitudinal and behavioral metrics in order to get more accurate results.

Here we go with a list of the easier metrics to get started with. I’ve grouped them by their measuring goals and divided them into behavioral and attitudinal metrics, so that you can choose them more easily and try to build your own metric framework that measures the User experience of your project most accurately.

Performance

Behavioral metrics: Task success rate, task completion time, task error rate

These measurements are simply averages of the success rate, completion time or error rate across all Users. If you don’t do continuous User research or don’t have the buy-in for User testing (yet), the error rate is often the easiest to measure, since most digital products already show error messages and simple code hooks can be employed to count these. Remember to keep your margin of error in mind (it can be calculated using a simple online calculator).
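To make the averages and the margin of error a bit more tangible, here is a minimal Python sketch. The session records and their keys (`success`, `seconds`, `errors`) are made up for illustration, and the adjusted Wald interval is just one common way to estimate the margin for small samples; an online calculator may use a different method.

```python
import math
from statistics import mean


def summarize_task(sessions, z=1.96):
    """Summarize one task across all observed Users.

    Each session is a dict with the hypothetical keys "success" (bool),
    "seconds" (float) and "errors" (int). z=1.96 corresponds to 95% confidence.
    """
    n = len(sessions)
    successes = sum(1 for s in sessions if s["success"])

    # Adjusted Wald interval (an assumption, not the only option):
    # add z^2/2 successes and z^2 trials, then compute the usual margin
    # around the adjusted proportion.
    n_adj = n + z ** 2
    p_adj = (successes + z ** 2 / 2) / n_adj
    margin = z * math.sqrt(p_adj * (1 - p_adj) / n_adj)

    return {
        "success_rate": successes / n,
        "success_rate_low": max(0.0, p_adj - margin),
        "success_rate_high": min(1.0, p_adj + margin),
        "mean_seconds": mean(s["seconds"] for s in sessions),
        "errors_per_session": mean(s["errors"] for s in sessions),
    }


# Made-up data from five test sessions of the same task.
sessions = [
    {"success": True, "seconds": 41.0, "errors": 0},
    {"success": True, "seconds": 58.0, "errors": 1},
    {"success": False, "seconds": 95.0, "errors": 3},
    {"success": True, "seconds": 47.0, "errors": 0},
    {"success": True, "seconds": 39.0, "errors": 1},
]
print(summarize_task(sessions))
```

With only five Users the interval around the success rate is very wide, which is a good reminder to report the range to stakeholders and not just the single number.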

Attitudinal metrics: SEQ or SUS (expectation/pre task and experience/post task)

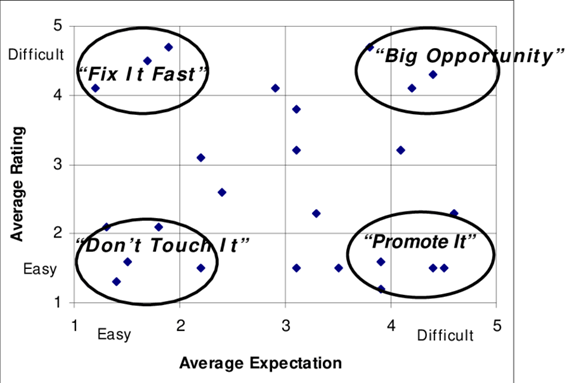

SUS stands for System Usability Scale and is a common way of measuring the User’s perceptions. SEQ stands for Single Ease Question and is a much simpler, one-question survey, so it might be the easier one to get started with. Here is an example of how we applied it to a metaverse research project. The trick to turning this perception measure into a performance measure is to ask it twice: if we ask the User to fill in the survey before completing a task and again afterwards, it can be used as a measurement of performance. If we run this survey for more than one task and plot the data for the different tasks in a graph, it can even give a visual representation of which issues to prioritize. You can find an example below.

Albert, William & Dixon, E. (2003). Is this what you expected? The use of expectation measures in usability testing.
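The before-and-after comparison can also be tabulated with a few lines of Python. This is only a sketch under my own assumptions: a 7-point scale where 7 means “very easy”, the scale midpoint as the dividing line, and quadrant labels that I made up for illustration (they are not taken from the paper).

```python
from statistics import mean

MIDPOINT = 4.0  # assumption: 7-point scale, 1 = very difficult, 7 = very easy


def classify_task(expected, experienced, midpoint=MIDPOINT):
    """Compare average pre-task (expected) and post-task (experienced) ratings.

    Returns (expected average, experienced average, label). The labels are
    hypothetical names for the four quadrants of an expectation/experience plot.
    """
    exp_avg = mean(expected)
    act_avg = mean(experienced)
    expected_easy = exp_avg >= midpoint
    was_easy = act_avg >= midpoint

    if expected_easy and not was_easy:
        label = "fix first"  # Users expected it to be easy, but it was hard
    elif expected_easy and was_easy:
        label = "leave as is"
    elif not expected_easy and was_easy:
        label = "pleasant surprise"
    else:
        label = "improvement opportunity"
    return exp_avg, act_avg, label


# Made-up ratings from three Users for two tasks.
ratings = {
    "create account": {"expected": [6, 7, 6], "experienced": [2, 3, 3]},
    "export report": {"expected": [3, 2, 3], "experienced": [6, 5, 6]},
}
for task, r in ratings.items():
    print(task, classify_task(r["expected"], r["experienced"]))
```

Tasks that Users expected to be easy but experienced as hard are usually the ones worth looking at first, because that gap is where frustration builds up.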

Preference

Behavioral & Attitudinal: Prototype, A/B Testing or multivariate testing

When testing the User’s preference, we need to think of the return on investment (ROI). At the beginning of your product journey, there are many open questions, and we can’t just start off with an A/B test of two button placement variants. That would assume that the journey to get to that button is already what the User prefers. Hence it is most common to start with a prototype test and then work our way to the A/B test for detail improvements. The following graph visualizes well which kind of User test is appropriate in which phase of your project in order to reduce the risks of assumptions and uncertainty.

The Fountain Institute: Getting started in User testing and experimentation, a Designer’s Guide (thefountaininstitute.com/blog/getting-started-in-testing-and-experimentation-a-designers-guide)

Even after you present the above graph to your stakeholders, it’s possible that, due to a gap in their understanding of UX, you still have no buy-in for User testing. In that case, maybe this will help to convince them:

Statistically, you can catch about 85% of usability issues with only five Users [3] (or, depending on the complexity of your project, five Users per persona; read more here), so a low-fi prototype test doesn’t take as much effort or time as your stakeholders might think. Always remind them: testing early is cheaper than developing a product based on assumptions and finding out that it doesn’t solve the User’s problems.
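The 85% figure comes from the formula in Nielsen’s article [3]: the share of problems found with n Users is 1 - (1 - L)^n, where L is the share of problems a single User uncovers (31% on average across the projects Nielsen studied). A small Python sketch to play with the numbers; the function name is my own.

```python
def share_of_problems_found(n_users, single_user_rate=0.31):
    """Share of usability problems found with n_users test participants.

    Uses 1 - (1 - L)^n from Nielsen [3]; L = 0.31 is the average he reports,
    and your own project may well differ.
    """
    return 1 - (1 - single_user_rate) ** n_users


for n in range(1, 9):
    print(n, f"{share_of_problems_found(n):.0%}")
```

The curve flattens quickly, which is the whole argument: each additional User after the first handful mostly shows you problems you have already seen.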

Perception

Behavioral: Tapping while completing task

There are many tools today that make the observation of User behavior easy, even without complicated equipment. I still find this very simple technique for measuring the cognitive load that a User is experiencing while completing a task very efficient: have your Users tap a finger repetitively and quite fast while they’re completing a task. When their cognitive load increases, their tapping frequency will slow down or even stop momentarily while they need to focus entirely on the task.
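If you record the taps (for example as timestamps from a key press or a touch event), spotting the slowdowns can be automated. The following Python sketch is only one possible approach: it assumes you also recorded a short baseline of the User tapping without any task, and the factor of 2 for “noticeably slower” is an arbitrary choice of mine that you would need to tune.

```python
from statistics import median


def tap_intervals(timestamps):
    """Seconds between consecutive taps."""
    return [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]


def find_slowdowns(task_taps, baseline_taps, factor=2.0):
    """Return (start time, gap) for every gap clearly longer than the baseline.

    A gap counts as a slowdown when it exceeds factor times the median
    baseline interval. The factor is an assumption, not an established value.
    """
    threshold = factor * median(tap_intervals(baseline_taps))
    return [
        (start, gap)
        for start, gap in zip(task_taps, tap_intervals(task_taps))
        if gap > threshold
    ]


# Made-up timestamps in seconds.
baseline = [0.0, 0.5, 1.0, 1.5]
during_task = [0.0, 0.5, 1.0, 3.0, 3.5]
print(find_slowdowns(during_task, baseline))
```

Matching the flagged start times against a screen recording then shows which step of the task caused the User to stop and think.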

Attitudinal: SEQ or SUS (w/competitors)

I already introduced the SEQ and SUS surveys earlier. These are great existing tools that already have established general guidelines on how to interpret the scores of participants:

Hadi Althas 2018: How to Measure Product Usability with the System Usability Scale (SUS) Score (uxplanet.org/how-to-measure-product-usability-with-the-system-usability-scale-sus-scoref)

These categories are very easy for stakeholders to understand and that’s why we can create a very convincing graph with them.
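Before a SUS result can be placed into one of these categories, the ten answers have to be converted into a score between 0 and 100. The standard scoring rule is short enough to sketch in Python (the function name is my own): odd-numbered items contribute their answer minus 1, even-numbered items contribute 5 minus their answer, and the sum is multiplied by 2.5.

```python
def sus_score(responses):
    """Convert ten SUS answers (each 1 to 5, in questionnaire order) to 0-100."""
    if len(responses) != 10:
        raise ValueError("SUS needs exactly 10 responses")

    total = 0
    for item_number, answer in enumerate(responses, start=1):
        if not 1 <= answer <= 5:
            raise ValueError("each SUS answer must be between 1 and 5")
        if item_number % 2 == 1:
            total += answer - 1  # positively worded items (1, 3, 5, 7, 9)
        else:
            total += 5 - answer  # negatively worded items (2, 4, 6, 8, 10)
    return total * 2.5


# Made-up answers from one participant.
print(sus_score([4, 2, 5, 1, 4, 2, 4, 2, 5, 1]))
```

Averaging the scores of all participants gives the number you would compare against the interpretation guidelines, or against the same survey run on a competitor’s product.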

And that’s it. Yes, these are really the first metrics you (and I) could get started with, that are not all that tricky to implement. If you’re a pragmatist, like me, then you’re probably asking yourself now: Okay but what does that really look like? What’s my first step here? Which one do I start with?

How to really get started?

I wish I could now introduce you to one framework, like the Google HEART framework, the USER framework or any other, and tell you: just implement these metrics one by one, and you’re good to go! However, no such framework works for all projects. For example, the Google HEART framework uses different metrics to measure Happiness, Engagement, Adoption, Retention and Task success, and while that might work great for Google or other B2C products, adoption and retention measurements often don’t apply to B2B products.

In Jared Spool’s words:

“Unfortunately, the theory that a grand unified metric can tell us how well our products and services are doing is just a myth. No such metric actually exists. There are many ways to measure success.”

And in my words: you need to come up with your own metrics to measure (and define) success for each project – or even for each usage context.

Measuring your goals is possible on different levels, as pictured in the graph below. If we measure a goal that is too broad, it will be more difficult to identify what we can do to improve the metric. The more precise we are, that is, the further down in the levels we go, the more accurately we can measure, evaluate and improve incrementally.

Centigrade (Thomas Immich, Britta Karn) 2020: Usage data analysis

To identify our possible metrics, Google’s Goals, Signals, Metrics process combined with our measuring goals of Performance, Perception and Preference can help.

Goals Signals Metrics x Performance Perception Preference

Imagine you’re starting a new project, have conducted generative User research and have identified one or more goals for your persona(s). Then you’ve already taken your first step towards defining the metrics for your project. Yay! Now, using the Goals, Signals, Metrics process and choosing metrics from at least two of the Performance, Preference and Perception categories, while paying attention to using a mix of behavioral and attitudinal measures, you can easily create your very own first set of metrics to get started with.

That sounds a bit complicated but let’s have a look at how Goals Signals Metrics works and combine these with the Performance, Preference and Perception metrics.

Goals Signals Metrics is very simple: first you define the Goals you want to measure, then you identify the Signals that show you that a goal was reached. Some call these “objectives”, but the essence is the same. There can be multiple Signals for the same goal. For example, in the image below I’ve used the very general goal of the User being able to complete their task. There can be multiple Signals for this: it could be a low error rate, but it could also be a low time on task. So, we really need to be sure which Signals to choose when. We should always ask ourselves which Signals in fact signify the goal that we’re trying to measure and whether they apply in the context of the User journey. (Sometimes a long time on task is preferable as long as the task is completed in the end, for example if the goal is for the User to explore their options.)

This also means that, in the long run and in order to measure more accurately, it is actually preferable to measure one goal by different Signals (and metrics) in order to make sure that they are moving in the same direction and to correlate our data. In the short run, or in order to get started with metrics, it is acceptable to measure our goal with one Signal as long as we can be really sure that this Signal in fact signifies whether our goal is being reached.

On the board below, I’ve visualized what the metrics could look like for some very high-level and general goals. Obviously, you never want to use general goals like these for your actual product; be as specific as possible instead. Remember that choosing your goals and what to measure is just as important as what you measure them by.
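For those who think in code, here is a rough Python sketch of how a Goals, Signals, Metrics board could be written down and checked against the two rules of thumb from this post: cover at least two of the three measuring goals, and mix behavioral with attitudinal metrics. The class names, field names and the check itself are my own illustration, not part of Google’s process.

```python
from dataclasses import dataclass, field


@dataclass
class Metric:
    name: str
    category: str  # "performance", "preference" or "perception"
    kind: str      # "behavioral" or "attitudinal"


@dataclass
class Signal:
    description: str
    metrics: list[Metric] = field(default_factory=list)


@dataclass
class Goal:
    description: str
    signals: list[Signal] = field(default_factory=list)


def check_metric_set(goals):
    """Return a list of warnings; an empty list means both rules are met."""
    metrics = [m for g in goals for s in g.signals for m in s.metrics]
    categories = {m.category for m in metrics}
    kinds = {m.kind for m in metrics}

    warnings = []
    if len(categories) < 2:
        warnings.append("cover at least two of performance, preference, perception")
    if kinds != {"behavioral", "attitudinal"}:
        warnings.append("mix behavioral and attitudinal metrics")
    return warnings


# The very general example goal from this post, with two Signals.
goal = Goal(
    "User is able to complete their task",
    signals=[
        Signal("few errors", [Metric("task error rate", "performance", "behavioral")]),
        Signal("task feels easy", [Metric("SEQ after task", "perception", "attitudinal")]),
    ],
)
print(check_metric_set([goal]))
```

Writing the board down like this also makes it easy to review with stakeholders: every metric has to name the Signal and the Goal it belongs to, so orphaned “nice to have” numbers stand out.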

Buy-in starts with the implementation of a metric

Business stakeholders should be included as early as the decision on which metrics to measure, so that it is not just the UX team that owns the metric. Everyone should be aware of it and agree that it is a useful metric. This is where using metrics to improve stakeholder buy-in starts. It is a bit similar to acceptance criteria or a “definition of done” in an agile development context.

Goodhart’s law

Of course, it wouldn’t be User research, if there weren’t biases or laws that we need to keep in mind. Specifically: Goodhart’s law.

Goodhart’s law is commonly summarized as: when a measure becomes a target, it ceases to be a good measure. Applied to UX metrics, this means that once we introduce a metric to measure a certain behavior, we can get so carried away with trying to improve the numbers that we forget why we chose it in the first place and whether it still measures what we want to measure. Hence, it is important to continuously look at metrics from different angles, employ several different metrics to measure one goal and question the metrics we’ve chosen, especially over time.

So that’s almost it from me. I hope you enjoyed my blog post and that it will help you as much as it will help me when employing UX metrics in your projects. I don’t want to finish without giving credit where credit is due. My article is simply a summary of research many other UX experts have done, so thank you for that! You’ll find a detailed list of my sources below the post.

And be sure to check back as I’m looking forward to implementing my own suggestions in my current and future projects and reporting back on how it went in a UX Metrics 2.0 blog post!

—

[1] Ratkliff & Kelakar 2020: https://www.userzoom.com/ux-blog/what-ux-metrics-and-kpis-do-the-experts-use-to-measure-experience/

[2] Jeff Humble 2022: https://www.thefountaininstitute.com/free-masterclass-ux-metrics?utm_source=webinar&utm_medium=talk+slide&utm_campaign=DPE+Fall+2022

[3] Jakob Nielsen 2000: https://www.nngroup.com/articles/why-you-only-need-to-test-with-5-users/

The Fountain Institute: Choosing the Right Metrics https://www.youtube.com/watch?v=wBxnuk4sIns

Bill Albert, Tom Tullis 2008: Measuring the User Experience: Collecting, Analyzing, and Presenting UX Metrics

Ben Davison 2019: UX Metrics https://www.youtube.com/watch?v=PU5i-Y1m1l4

Jared Spool 2017: Is Design Metrically Opposed? https://www.youtube.com/watch?v=aMqgTAlpVVc&t=2646s