In recent years, so-called “natural user interfaces” (NUI) have grown in popularity. More and more often, interaction via touch and gestures is employed instead of mouse and keyboard. The iPhone was greeted with great enthusiasm and played a major part in spreading touch screen systems in the consumer market, while also introducing gesture-based interaction in a playful way. Hardly any touch screen user will be unfamiliar with the pinch gesture that is used to resize or zoom images on the iPhone.

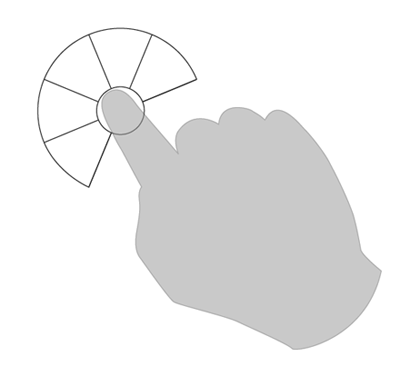

Pinch gesture

Natural user interfaces are trending in the consumer sector. This is especially true for gaming: with the Nintendo Wii, actions are not performed by pressing a button but with an actual movement with the hand that holds the controller. Microsoft Project Natal even discards controllers completely and is based on interaction without any special input devices (free-form gestural interface).

Differences in interaction

This trend towards natural user interfaces forces developers and interaction designers to rethink interaction paradigms. Until recently, most applications were designed for interaction with devices such as mouse or keyboard. Current multitouch devices, on the other hand, are primarily used via hand-based or finger-based interaction. This means that most existing applications cannot simply be ported to a touch screen based system, because fingers as “input devices” have different characteristics than mouse, keyboard or pen. And because a touch screen system offers more options for interaction, the available interactions are much more implicit.

A mouse has a very limited number of interaction options, and pressing the left mouse button triggers most actions. With a touch screen, on the other hand, there is no dedicated input “device” (apart from the screen itself) and the user has to find out how to interact with the system. This can be done by watching others perform interactions, by reading a manual or by exploring the system.

Exploring the user interface can be beneficial when the user is motivated by the playful interaction. But the sheer number of interaction possibilities with a multitouch system makes it unlikely that the user will discover all of them, especially the more complex ones. The challenge for the user interface designer lies in creating a user interface that supports users in identifying the relevant gestures on their own.

Menu navigation on multitouch devices

Standard user interfaces mostly come equipped with a linear menu (also called pull-down menu).

Linear menu or pull-down menu

Navigating such a menu with a mouse works well as soon as the user is familiar with the interaction paradigm. On touch screen systems, on the other hand, a linear menu is not optimal: because the contact area of a finger is larger than a mouse pointer, much more screen space is required to fit the menu.

In addition, the required movements are larger with a finger than with a mouse, because the target areas in the menu have to be bigger. Moving the hand over large areas of the screen whenever interaction with the menu takes place is inconvenient and quickly becomes tiresome, especially as displays become bigger and bigger. To avoid the phenomenon called “gorilla arm”, interaction has to be rethought.

The pie menu

Due to the trend towards touch-based interaction and because of the shortcomings of “classical” menus, we may well see the comeback of a menu which, despite its advantages over linear menus, has never made its breakthrough: the pie menu.

Shorter access times (Fitts’ Law), better orientation with all menu elements being equally far away from the center and support for gestures (“muscle memory”) are some of the advantages of a pie menu over a linear menu on a system that is used with mouse and keyboard.
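The access-time argument can be made concrete with Fitts’ Law. In its common Shannon formulation, the index of difficulty of a pointing movement is log2(D/W + 1), where D is the distance to the target and W is its width; movement time grows linearly with this index. The following Python sketch compares a linear menu and a pie menu. All dimensions (item height, pie radius, effective sector width) are illustrative assumptions and not measurements:

```python
import math

def index_of_difficulty(distance, width):
    """Fitts' index of difficulty in bits (Shannon formulation)."""
    return math.log2(distance / width + 1.0)

def linear_menu_ids(items, item_height):
    """Difficulty of each item in a pull-down menu, pointer starting at the top edge."""
    # Item k (1-based) has its center (k - 0.5) item heights below the start.
    return [index_of_difficulty((k - 0.5) * item_height, item_height)
            for k in range(1, items + 1)]

def pie_menu_ids(items, radius, sector_width):
    """Difficulty of each item in a pie menu, pointer starting at the center."""
    # Every sector is the same distance away, so every item is equally hard.
    return [index_of_difficulty(radius, sector_width)] * items

# Illustrative numbers only (assumptions, in pixels).
linear = linear_menu_ids(items=8, item_height=20)
pie = pie_menu_ids(items=8, radius=40, sector_width=30)
print("linear:", [round(v, 2) for v in linear])
print("pie:   ", [round(v, 2) for v in pie])
```

In this model the difficulty of the linear menu rises with every item further down the list, while it stays constant for all items of the pie menu.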

But the pie menu also has its drawbacks: because of its circular structure, only a very limited number of menu items can be presented before the respective sectors become so small that they cannot be easily selected.

Pie menus for video games only?

The game industry discovered pie menus a long time ago. Games that are based on speed benefit from them in particular. After a motor action has been performed frequently, the brain encodes the respective movement in motor memory, i.e. users simply know the position to which they have to move the mouse or the gesture that executes the action.

It is similar to typing on a keyboard. To novices the sheer number of keys makes selection slow, but the more the keyboard is used, the faster the intended keys can be reached and the less concentration is needed – for experts even without looking at the keyboard. Simply put: the use of pie menus is as intuitive and “un-unlearnable” as riding a bicycle.

Pie menu meets touch screen

However, if you want to use pie menus for interacting with a touch screen system, it does not suffice to simply replace the linear menu with a pie menu. Touch screens are fundamentally different from “classical” computer setups with traditional screens: the mouse cursor is replaced by a finger, which covers a much bigger part of the screen.

This means, amongst other things, that the pie menu’s minimal size is larger than that of a classic linear menu on a standard screen, which results in higher screen space demands. In addition, the number of sectors in the pie menu is restricted by the size of a fingertip. If you want to use simple gestures, it is advisable to use sectors with an angle of at least 45°, since the user can navigate to them precisely enough for the system to recognize them clearly. Bearing these restrictions in mind, you get a pie menu with a maximum of eight sectors. Considering further that the finger of an adult typically has a diameter of 16 to 20 mm (Saffer, D. Designing Gestural Interfaces, 2008), you will need a center point of the menu with a diameter of at least 18, better 20 mm.
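The numbers above translate fairly directly into hit testing. The following Python sketch derives the maximum number of sectors from the 45° minimum and maps a touch position to a sector, treating the center point as a neutral zone. The function names, the choice of “up” as sector 0, the clockwise numbering and the DPI-based conversion are assumptions made for illustration:

```python
import math

MIN_SECTOR_ANGLE_DEG = 45.0  # minimum sector angle from the text
CENTER_DIAMETER_MM = 20.0    # recommended center diameter from the text

def max_sectors(min_angle_deg=MIN_SECTOR_ANGLE_DEG):
    """Number of sectors that fit into a full circle."""
    return int(360 // min_angle_deg)

def mm_to_px(mm, dpi):
    """Convert a physical length to pixels (assumes the screen DPI is known)."""
    return mm / 25.4 * dpi

def sector_at(dx, dy, sectors=8, center_radius=0.0):
    """Return the sector index for a touch at offset (dx, dy) from the menu center.

    Screen coordinates are assumed, so y grows downward. Sector 0 is
    centered on "up" and indices increase clockwise. Touches inside the
    center point return None.
    """
    if math.hypot(dx, dy) <= center_radius:
        return None
    # Angle measured clockwise from "up", normalized to [0, 360).
    angle = math.degrees(math.atan2(dx, -dy)) % 360.0
    width = 360.0 / sectors
    # Shift by half a sector so that sector 0 straddles the "up" direction.
    return int(((angle + width / 2.0) % 360.0) // width)

# Example: a 20 mm center point on an assumed 160 dpi display.
center_radius_px = mm_to_px(CENTER_DIAMETER_MM, dpi=160) / 2.0
print(max_sectors(), sector_at(100, 0, center_radius=center_radius_px))
```

With eight sectors, a drag straight to the right lands in sector 2 and a drag straight down in sector 4.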

It is not only the finger itself that covers menu elements, but also – and in particular – the hand being used. Depending on the user’s perspective on the screen and the position of the hand, one or more sectors of the menu disappear under the hand – even if the ball of the hand does not lie directly on the screen.

Pie menu with closed design

Closed design vs. open design

No matter which position the hand occupies – within a closed pie menu design it is impossible for the user to see all elements at one time without lifting the hand.

An open design means that the pie menu does not contain any areas that are covered by the hand or the finger. To achieve this, all menu sectors that cannot be seen clearly have to be removed. The resulting menu roughly takes the form of a fan.

Pie menu with open design

Disadvantages of the open design

With an open design, all menu items are directly visible. There are some disadvantages to this approach, however: the number of available menu sectors is inevitably reduced. In our experience, in a pie menu with eight sectors, most right-handed users cover the three bottom right sectors (left-handed users cover the three sectors on the bottom left, respectively), which accordingly cannot be used. Depending on the menu’s field of application, this can be a major restriction. If there are more actions available than sectors, additional sectors have to be made available in a different way.
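The effect of the open design on the number of usable sectors can be sketched in a few lines of Python. The compass-style sector names and the exact choice of which three sectors count as “bottom right” or “bottom left” are assumptions for illustration; in practice they depend on how the menu is rotated and on the individual user:

```python
# Eight sectors, named by compass direction, clockwise starting at the top.
SECTORS = ["N", "NE", "E", "SE", "S", "SW", "W", "NW"]

# Assumption: the hand covers the diagonal sector below it plus both neighbors.
COVERED_BY_HAND = {
    "right": {"E", "SE", "S"},  # right-handed users: bottom right
    "left": {"S", "SW", "W"},   # left-handed users: bottom left
}

def usable_sectors(handedness="right"):
    """Return the sectors that stay visible in an open pie menu."""
    covered = COVERED_BY_HAND[handedness]
    return [name for name in SECTORS if name not in covered]

print(usable_sectors("right"))
print(usable_sectors("left"))
```

Either way, five of the eight sectors remain, and the fan simply opens to the opposite side for left-handed users.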

Submenus

One way of offering more actions is to add submenus to the open pie menu. The sectors of the menu’s main level open submenus in the form of additional pie menus. If you stick with the open pie menu design, you get five possible actions with one level. With more levels, however, more different actions can be realized.

This makes new gestures possible. These do not consist solely of one movement in one direction, but include a change of direction. It is advisable not to use more than three hierarchy levels, though, since more levels result in very complex gesture sequences at the expense of the menu’s clarity.
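A hierarchical pie menu can be thought of as a tree in which a gesture is simply the sequence of directions leading to an action. The following Python sketch illustrates this with a made-up menu. The action names, the dictionary layout and the helper functions are hypothetical, and the capacity calculation assumes that every sector on every level but the last opens a complete submenu:

```python
MAX_LEVELS = 3  # recommended upper bound from the text

def max_actions(sectors_per_level, levels):
    """Upper bound on reachable actions if every sector opens a full submenu."""
    return sectors_per_level ** levels

# Hypothetical menu: a dict is a submenu, a string is an action.
MENU = {
    "N": {"N": "copy", "NE": "cut", "W": "paste"},
    "W": "undo",
    "NE": {"N": {"NW": "bold"}},
}

def resolve(menu, strokes):
    """Follow a gesture (list of stroke directions) and return its action.

    Returns None if the gesture leaves the menu, continues past an
    action, or stops on a submenu instead of an action.
    """
    node = menu
    for direction in strokes:
        if not isinstance(node, dict) or direction not in node:
            return None
        node = node[direction]
    return node if isinstance(node, str) else None

print([max_actions(5, levels) for levels in range(1, MAX_LEVELS + 1)])
print(resolve(MENU, ["N", "NE"]))  # one stroke up, then a change of direction
```

With five usable sectors, one level offers 5 actions, two levels at most 25 and three levels at most 125, which shows how quickly a few levels cover even large command sets.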

Learning by doing

The pie menu skillfully circumvents the problems associated with gestures and touch screen systems. It is unlikely that users will find out about all the different interaction possibilities – especially the complex gestures or actions – by themselves, since there are so many. The pie menu constantly gives visual feedback, though, and shows users the path to the intended action while they are moving through the menu.

Gestures in the hierarchical pie menu

In this way, gestures are discovered with little effort: through “learning by doing”.

Conclusion

The latest developments and projects in the field of natural user interfaces show that the technology is currently undergoing many changes. This opens up new possibilities, but to make use of them, a change in user interface design is needed: traditional and established interface elements have to be re-explored and supplemented or adapted, if necessary, to ensure optimal use on new systems.

The interaction with a touch display has to feel natural and must not demand large or complicated movements from the user. The pie menu on a touch screen minimizes the effort needed to choose an action and – because of the short distances – is easier to use than a drop-down menu. Additionally, it has the advantage that both novices and experts are supported to the same extent, while also offering the possibility to use the unique characteristics of touch screen systems, e.g. the gesture command.

With only minor adjustments, pie menus can be employed in touch screen applications. They help the user to reach the intended results quickly and at the same time fulfill a very important rule: “Don’t make me think!” (Steve Krug)

A concrete design study for a pie menu can be found in another blog article.