Recently I gave a talk at dotnet Cologne and at the DWX Developer Week titled “4K and other challenges – Next Generation Desktop UIs for Windows 10”. The session discussed the term Universal App Platform in Windows 10 and showed what a developer can make of it in order to create future-oriented user interfaces. This blog article is aimed not only at those who attended my session, but also at those who could not be there. It also provides further information on the topic. As in the session, there is a coding part at the end that demonstrates some new Universal App features.

Retrospection of Windows 8

Windows 8 Start screen (Reference: Microsoft News Center)

“One UI to rule them all” may have been the first impression Windows 8 made on most of its users back in 2012. The touch-oriented, flashy tile interface and the missing start menu seemed to be a major break in consistency for many users. However, there was a lot going on behind the scenes. In accordance with the Platform Convergence Journey, the Windows kernel was unified for Xbox, Windows 8, and Windows Phone 8 with the introduction of Windows 8. Windows 8.1 then brought the app model together. Even then, under the term Universal App, it was possible (although a bit cumbersome) to develop a Windows Store App with a single app identity and the same codebase for desktops and smartphones.

Platform Convergence Journey (Reference: Screencapture Microsoft Virtual Academy)

The Windows 10 Philosophy

Windows 10 desktop and start menu (Reference: Microsoft News Center)

At first sight it appears as though Windows 10 takes a step backwards. From the viewpoint of a desktop user that may be right: Windows Store Apps can run in a window, there is a classic start menu combined with some tiles, and touchscreen interaction is no longer assumed as the default. The UI only adapts to touch when Windows is running in tablet mode. From a developer's point of view, however, Microsoft is consistently pursuing unification: in future there will be no separate Windows versions for different devices. Windows 10 will run on all devices, be it a smartphone, desktop, Xbox, Surface Hub, or even HoloLens or IoT devices like the Raspberry Pi. On the so-called Universal Windows Platform a future Windows App will likewise run on all devices, in the true sense of write once, deploy everywhere.

Universal Windows Platform (Reference: Microsoft Blog)

Hence the developer will create one app, a truly single binary, which will be deployed and run across all devices. However, an application should by no means look the same on all devices. In fact, developers are expected to adapt the UI to the different devices, be it a smartwatch, a mobile line-of-business app, a desktop application or a UI for controlling a refrigerator in the Internet of Things. The MVVM pattern makes a clean separation of concerns feasible: the internal business logic can be isolated from the interchangeable, customizable and highly optimized presentation layer.

MVVM: Model-View-ViewModel software architecture

Adaptive UIs

What does such a customization look like? What has to be adjusted for different devices and where? Which tools are available to the developer to adjust the UI? How can different device families technically be filtered and customizations made according to this information?

The most intuitive aspect when thinking about different devices may be the form factor: display size, resolution and the resulting physical pixel density (the one given by the device). Connected to that is the distance between the user and the device, the view distance. To summarize briefly: a higher physical resolution on a smaller display diagonal results in a higher pixel density, also known as PPI (pixels per inch). This matters even more when the viewer is very close to the device, which is why high-density displays are the rule rather than the exception for smartphones. High PPI can be used either to fit more content into the same space, which only makes sense to a certain degree, or to display the same content in higher quality. Circular shapes, which are always approximations on digital devices, come closer to their ideal representation, and the user interface of the application appears crisp and smooth to the user.
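To make the relationship between resolution, diagonal and pixel density concrete, here is a small sketch. The class and method names are made up for illustration; the only thing it assumes is the usual definition of PPI as the diagonal resolution in pixels divided by the diagonal size in inches.

```csharp
using System;

// Illustrative helper, not part of any Windows API.
public static class PixelDensity
{
    // PPI = length of the screen diagonal in pixels / length of the diagonal in inches.
    public static double PixelsPerInch(int widthPx, int heightPx, double diagonalInches)
    {
        if (diagonalInches <= 0)
        {
            throw new ArgumentOutOfRangeException(nameof(diagonalInches));
        }

        // Pythagoras on the pixel grid gives the diagonal resolution.
        double diagonalPx = Math.Sqrt((double)widthPx * widthPx + (double)heightPx * heightPx);
        return diagonalPx / diagonalInches;
    }
}
```

With these numbers, a 4K desktop monitor (3840 x 2160 on 27 inches) comes out at roughly 163 PPI, while a Full HD smartphone (1920 x 1080 on 5.5 inches) reaches roughly 400 PPI, even though it has only a quarter of the pixels.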

Often it is not enough to focus only on these factors. Borrowed from web development, Responsive Design can be used to differentiate between desktops and smartphones based on the resolution. A two-column layout becomes a one-column layout, and only the most relevant content is displayed while the rest moves to second levels of navigation. This is a first step that quickly reaches its limits. Responsive Design is a rather technical consideration that only reacts to device parameters. If the specific user role and the usage scenario are also considered during the UX design process, one will achieve remarkably better and more intuitive results. Putting the user first in this way is called Contextual Design.

Contextual Design

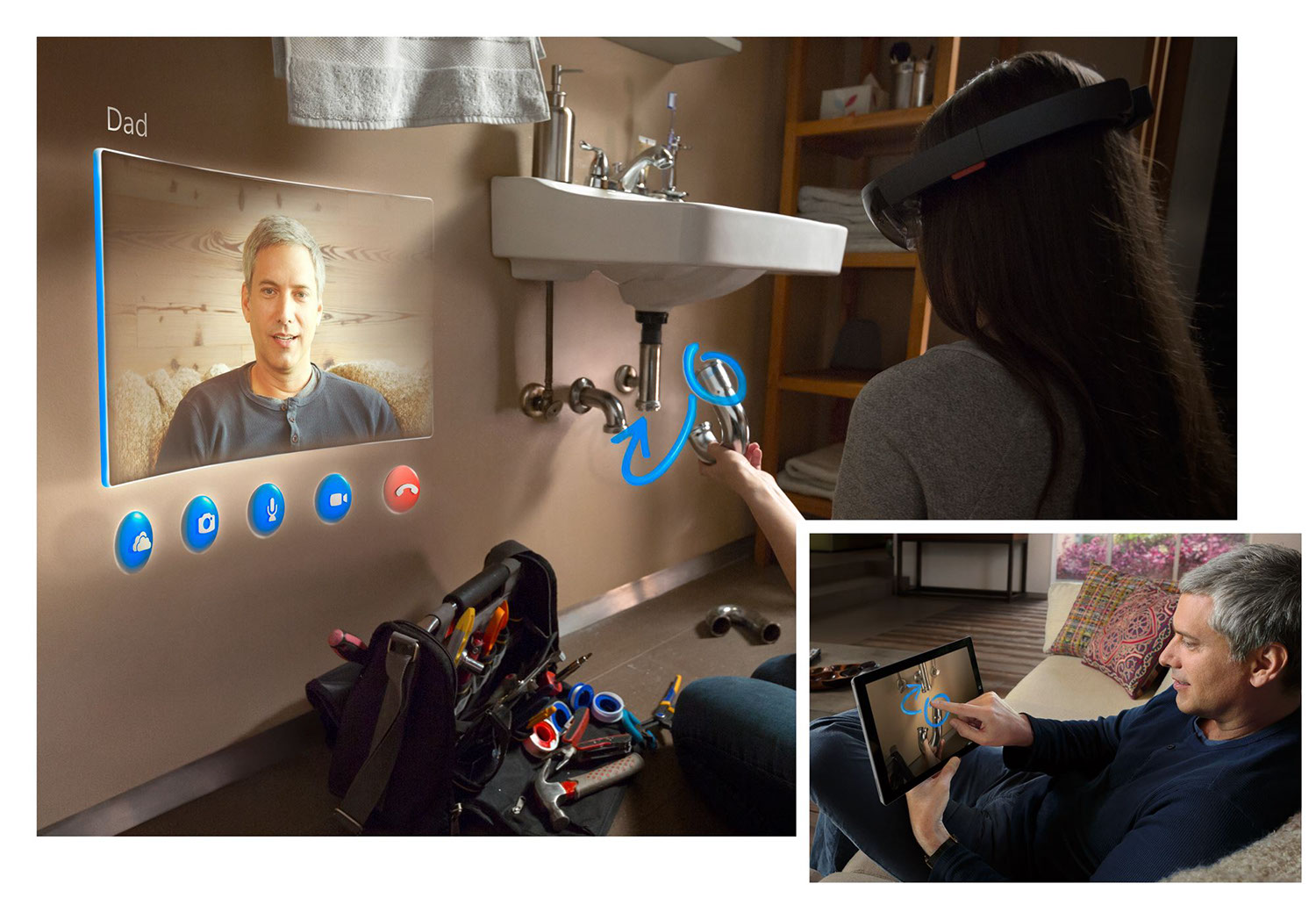

Microsoft HoloLens (Reference: Microsoft News Center)

Virtual reality and augmented reality applications are the best examples to illustrate this, since they benefit greatly from Contextual Design. After all, what does the term resolution mean for this device family? For a VR headset, a high resolution is important first of all to avoid the so-called screen-door effect, which is caused by the small distance between the display and the user’s eye. Even more important, however, is where the UI is placed inside the 3D scene of the VR or AR application, because this affects the perceived resolution. UI elements could even be placed in the real world, as recently demonstrated in Microsoft’s keynote at the Build 2015 conference. It is hard to tackle this challenge with classical Responsive Design.

Microsoft HoloLens: Concept for an augmented reality application (Reference: Microsoft News Center)

But even apart from VR and AR, it is sufficient to take a look at the desktop, meaning a stationary computer, be it an office PC, a conference monitor or a huge screen in a space station’s control room. Here, too, one quickly ends up in scenarios where Responsive Design is not enough.

Control room of the ESA space station (Reference: ESA Homepage)

Also, there are some developers who work with their screens in portrait mode. Are there applications that react to whether the monitor is rotated by 90 degrees? Pair-programming sessions or walkthrough-style code reviews put several people in front of a single display, and some of them are closer to the screen than others. Which applications take these scenarios into consideration?

A typical desktop?

Microsoft’s Surface Hub is a huge 84-inch conference screen with multiple cameras, directional microphones and pen input. The presenter stands right beside the screen and can write directly onto the application, while viewers sit quite a distance away, and some attendees are only connected remotely while still seeing the same content on their local computer. So far, I have not come across any meeting application that is optimized for this scenario without major adjustments.

Microsoft Surface Hub in meetings (Reference: Microsoft News Center)

Universal Apps targeting a broad audience will face these kinds of challenges, which can only be solved by Contextual UI Design. With it, an application knows its usage scenario and is able to react accordingly. The view distance can, for example, be detected through eye-tracking sensors on the device. An app will know whether it is in portrait mode and will be able to optimize its content for this scenario. Push-to-talk functionality will only be visible if the device actually has a microphone as an input type. There are numerous challenges that can only be solved properly if the user is taken into account. From a technical perspective this looks like a lot of hard additional work: processing eye-tracking information and reacting with layout and content optimizations seems to be a herculean task. However, Windows 10, Visual Studio 2015 and the Universal App Platform support the developer with new features for creating context-sensitive software with a great user experience. We will now focus on some of these tools.

UWP Features in Windows 10

As with the device-independent pixels in WPF, Microsoft continues to rely on an abstract pixel unit and introduces so-called effective pixels. These are based on the pixel density of the device and a heuristic view distance. The result is an abstract pixel unit, so that a developer has to care less about physical details: elements appear to the user at roughly the same size across all devices.
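If an app does need to know how many physical pixels are behind one effective pixel, for example when choosing a bitmap resolution, the platform exposes the current scale factor. The following is a minimal sketch, assuming it is called on the UI thread of a UWP app; the helper class and method names are made up for illustration, while DisplayInformation and RawPixelsPerViewPixel come from the Windows.Graphics.Display namespace.

```csharp
using Windows.Graphics.Display;

// Illustrative helper; only DisplayInformation is a platform type.
public static class EffectivePixels
{
    // Converts a length in effective (layout) pixels into physical (raw) pixels
    // for the display the current view is shown on.
    public static double ToPhysicalPixels(double effectivePixels)
    {
        // Must be called on a thread that is associated with a view (the UI thread).
        DisplayInformation display = DisplayInformation.GetForCurrentView();
        return effectivePixels * display.RawPixelsPerViewPixel;
    }
}
```

In XAML layout code this conversion is normally not needed at all, which is exactly the point of effective pixels: sizes such as FontSize="20" are already expressed in the abstract unit.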

Furthermore, the RelativePanel is a new layout container that arranges its child elements in relation to each other and to the panel itself. This is extremely powerful when the layout is supposed to change and adapt dynamically. Previously you had to implement a custom panel or make complex adjustments to a Grid.
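As a rough illustration of why this helps with adaptive layouts, the following sketch builds a RelativePanel in code and places a details text either beside or below a title. The class name, the method name and the "wide" flag are assumptions made for this example; in a real app the same attached properties would more commonly be set in XAML and switched by a VisualState.

```csharp
using Windows.UI.Xaml.Controls;

// Illustrative sketch; names other than the RelativePanel API are made up.
public static class RelativePanelSketch
{
    public static RelativePanel Build(bool wide)
    {
        var title = new TextBlock { Text = "Title" };
        var details = new TextBlock { Text = "Details" };

        var panel = new RelativePanel();
        panel.Children.Add(title);
        panel.Children.Add(details);

        // Pin the title to the top-left corner of the panel.
        RelativePanel.SetAlignLeftWithPanel(title, true);
        RelativePanel.SetAlignTopWithPanel(title, true);

        // Only the relation between the two elements changes with the available space.
        if (wide)
        {
            RelativePanel.SetRightOf(details, title);
        }
        else
        {
            RelativePanel.SetBelow(details, title);
        }

        return panel;
    }
}
```

The layout change is a single attached property, with no rows or columns to redefine, which is what makes the panel a natural fit for state-driven layouts.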

In order to react to different device families and form factors, we will now take a look at the so-called VisualState-Triggers. Microsoft recommends using the VisualStateManager and VisualStates for different view states.

Implementation sample: A custom VisualState-Trigger

Let’s imagine the following scenario: the user owns a monitor which is able to measure the current view distance (e.g. via webcam, a head tracking system, infrared, the Kinect etc.). As a developer I want my application to adjust its font size according to the view distance, so that the text always appears the same size to the users. They could be very close to the screen (because they may be taking notes with a pen on a touch-sensitive display), at a normal view distance, or further away during a pair-programming session. As a UI developer I have to detect these changes technically and then respond to them in XAML in an easy way. We will now implement this without focusing on crafting a beautiful and complete application. Instead, we will focus on demonstrating the newly available features of the platform.

First of all we will create a new Windows Universal App project, open the MainPage.xaml and place some text in it. At the same time we introduce two visual states as a showcase for the near and far view distance:

    <Grid Margin="25" Background="White">
        <VisualStateManager.VisualStateGroups>
            <VisualStateGroup x:Name="CommonStates">
                <VisualState x:Name="Near">
                    <!-- Trigger and reaction missing for now. -->
                </VisualState>
                <!-- Here could be more states … -->
                <VisualState x:Name="Far">
                    <!-- Trigger and reaction missing for now. -->
                </VisualState>
            </VisualStateGroup>
        </VisualStateManager.VisualStateGroups>
        <TextBlock x:Name="Output"
                   FontSize="20"
                   Text="Lorem ipsum dolor sit amet, consetetur…"
                   TextTrimming="CharacterEllipsis"
                   TextWrapping="Wrap" />
    </Grid>

Currently Microsoft only ships the AdaptiveTrigger. This trigger can only react to the window size (minimum width and height in effective pixels). So, at the moment there is no built-in feature that fulfills our requirements. Fortunately we can write our own trigger and use it in XAML. Let’s look at the following example: as soon as the view distance is less than 12 inches we want to switch to our near state. We can represent this as a trigger in the following way:

    <VisualState x:Name="Near">
        <VisualState.StateTriggers>
            <triggers:ViewDistanceTrigger MaxViewDistance="12" />
        </VisualState.StateTriggers>
        <!-- What to do? -->
    </VisualState>

This trigger must now be implemented. To do so, you can inherit from StateTriggerBase and use the SetActive method to (de)activate the trigger. The VisualState defined in XAML becomes active as soon as SetActive(true) is called. The trigger could for instance use a component called IViewDistanceDetector, which is responsible for detecting changes in the view distance:

    using Windows.UI.Xaml;

    public class ViewDistanceTrigger : StateTriggerBase
    {
        // Inclusive bounds (in inches) of the view distance for which the trigger is active.
        public double MinViewDistance { get; set; } = 0;
        public double MaxViewDistance { get; set; } = double.PositiveInfinity;

        private readonly IViewDistanceDetector viewDistanceDetector;

        public ViewDistanceTrigger()
        {
            viewDistanceDetector = new ViewDistanceDetector();
            viewDistanceDetector.ViewDistanceChanged += ViewDistanceDetector_ViewDistanceChanged;
        }

        private void ViewDistanceDetector_ViewDistanceChanged(object sender, ViewDistanceChangedEventArgs eventArgs)
        {
            double currentViewDistance = eventArgs.ViewDistanceInInch;

            // Activate the VisualState while the distance is within range, deactivate it otherwise.
            SetActive(currentViewDistance >= MinViewDistance && currentViewDistance <= MaxViewDistance);
        }
    }
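The detector itself is not part of the platform. One possible shape of its contract is sketched below, purely as an assumption: the type names follow the ones used by the trigger, while the Report method is a hypothetical stand-in for whatever sensor code (webcam, head tracking, Kinect) actually measures the distance.

```csharp
using System;

// Event data carrying the measured view distance in inches.
public class ViewDistanceChangedEventArgs : EventArgs
{
    public ViewDistanceChangedEventArgs(double viewDistanceInInch)
    {
        ViewDistanceInInch = viewDistanceInInch;
    }

    public double ViewDistanceInInch { get; }
}

// Contract consumed by the trigger: it only needs change notifications.
public interface IViewDistanceDetector
{
    event EventHandler<ViewDistanceChangedEventArgs> ViewDistanceChanged;
}

// Placeholder implementation without any real sensor access.
public class ViewDistanceDetector : IViewDistanceDetector
{
    public event EventHandler<ViewDistanceChangedEventArgs> ViewDistanceChanged;

    // Hypothetical entry point: sensor code would call this for each new measurement.
    public void Report(double viewDistanceInInch)
    {
        ViewDistanceChanged?.Invoke(this, new ViewDistanceChangedEventArgs(viewDistanceInInch));
    }
}
```

Keeping the trigger dependent only on the interface means the sensor technology can be swapped without touching the XAML. If a real sensor raises the event on a background thread, the trigger would most likely have to marshal the SetActive call to the UI thread first.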

To keep it simple, we will only change the font size of our TextBlock. With help from the new VisualState setters it is easy to apply discrete changes to the values without a complex definition of Storyboards. This feature is very handy for initial state changes:

    <VisualState x:Name="Near">
        <VisualState.StateTriggers>
            <!-- Active up to a view distance of 12 inches. -->
            <triggers:ViewDistanceTrigger MaxViewDistance="12" />
        </VisualState.StateTriggers>
        <VisualState.Setters>
            <Setter Target="Output.FontSize" Value="{StaticResource FontSize.Small}" />
        </VisualState.Setters>
    </VisualState>
    <VisualState x:Name="Far">
        <VisualState.StateTriggers>
            <!-- Active from a view distance of 25 inches upwards. -->
            <triggers:ViewDistanceTrigger MinViewDistance="25" />
        </VisualState.StateTriggers>
        <VisualState.Setters>
            <Setter Target="Output.FontSize" Value="{StaticResource FontSize.Large}" />
        </VisualState.Setters>
    </VisualState>

Of course an animated, smooth transition and more states in between would be nice. However, we only wanted to show how to write custom triggers in a Universal App to make adaptive and context-sensitive changes to the UI in a compact way. It is clear that this concept can be expanded to a variety of contextual information: is the screen in portrait mode? Do I have a microphone for speech input? Is an Xbox controller connected to my device? Is my application running on a specific device family? All these checks could comfortably be encapsulated in triggers. Hopefully Microsoft will provide more built-in triggers that relieve developers of the burden of writing their own.
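The device family question, for example, could be answered by a trigger along the following lines. This is a sketch rather than a finished component: the class name and its DeviceFamily property are made up for this example, while AnalyticsInfo.VersionInfo.DeviceFamily is the platform API that reports strings such as "Windows.Desktop" or "Windows.Mobile".

```csharp
using System;
using Windows.System.Profile;
using Windows.UI.Xaml;

// Illustrative trigger; only StateTriggerBase and AnalyticsInfo are platform types.
public class DeviceFamilyTrigger : StateTriggerBase
{
    private string deviceFamily;

    // Device family this trigger should react to, e.g. "Windows.Desktop".
    public string DeviceFamily
    {
        get { return deviceFamily; }
        set
        {
            deviceFamily = value;

            // The device family cannot change at runtime, so one check is enough.
            string current = AnalyticsInfo.VersionInfo.DeviceFamily;
            SetActive(string.Equals(current, deviceFamily, StringComparison.OrdinalIgnoreCase));
        }
    }
}
```

In XAML it would be used like the view distance trigger, for instance as `<triggers:DeviceFamilyTrigger DeviceFamily="Windows.Mobile" />` inside VisualState.StateTriggers.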

Conclusion

As we have just seen, Windows 10 and the Universal Windows Platform provide us with a number of aids to optimize the UI for different devices. Microsoft has to provide them, since it promises that a single app will run across all devices. That said, these tools can also be used to enhance the experience of desktop applications. Insights we gained while developing smartphone UIs for different user scenarios and roles can be transferred to the desktop world. Even in fixed desktop environments there is a variety of contexts and diverse possibilities for interaction, and we have to react to all those factors properly. As far as UX design is concerned, the desktop hasn’t come to an end yet. There is still a wide range of innovative concepts waiting to be explored and eventually used to create astonishing applications.

Download

If you would like to take a closer look at my presentation slides, code samples from the DWX or code snippets from this blog, you can do so:

- PDF: Presentation slides: Next Generation Desktop UIs for Windows 10

- ZIP: Code samples from the DWX

- ZIP: Code Snippets from this blog